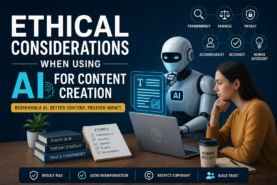

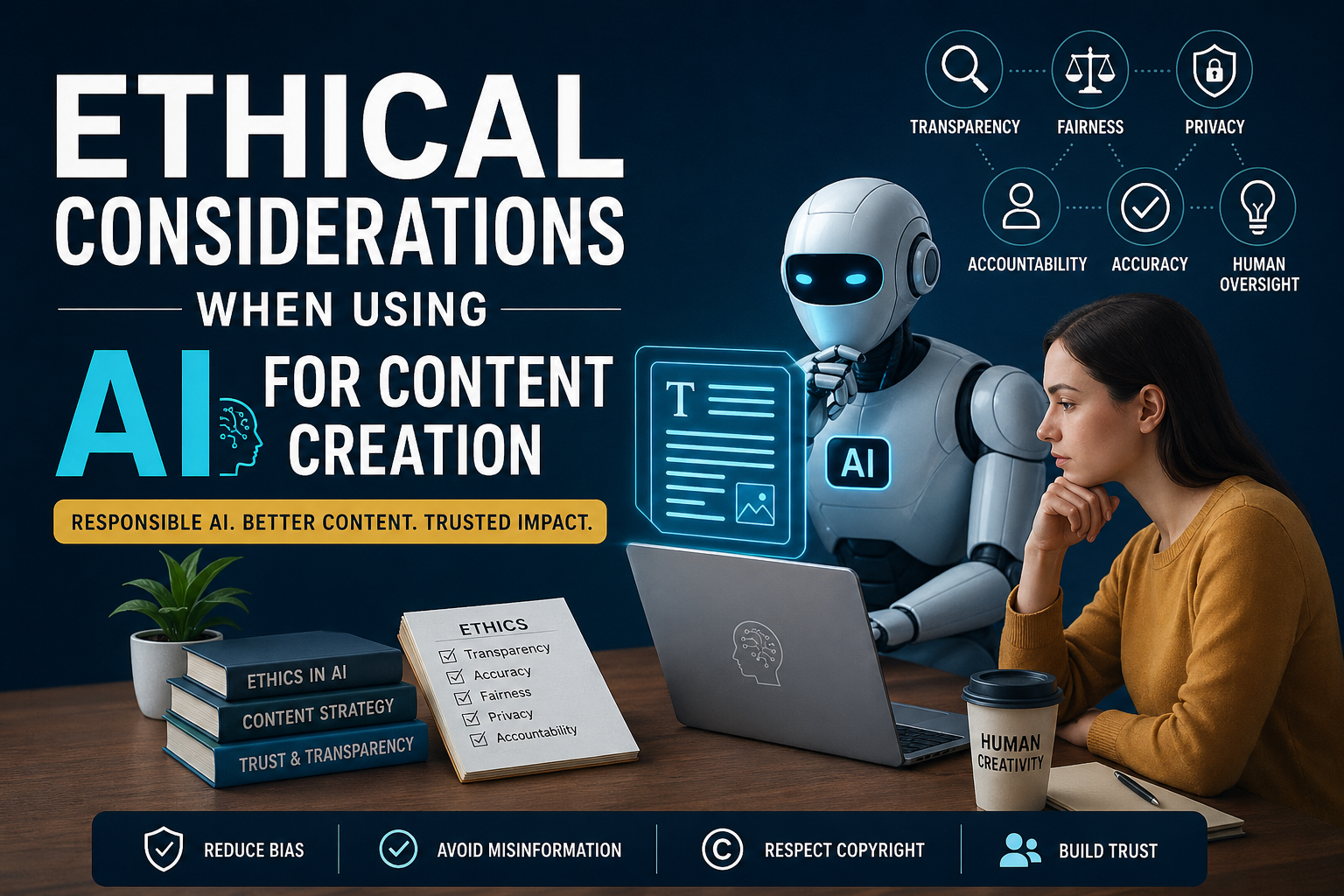

Artificial intelligence is reshaping how we create content, but with this innovation comes responsibility. Understanding AI content ethics in 2026 is essential for maintaining trust, ensuring accuracy, and avoiding legal or reputational risks.

From transparency to bias and copyright concerns, ethical AI use is now a core part of content strategy, not an afterthought.

What Is AI Content Ethics?

AI content ethics refers to the responsible use of artificial intelligence in creating digital content. It includes principles such as:

- Transparency

- Accuracy

- Fairness

- Accountability

- Respect for intellectual property

As AI tools become more advanced, the ethical standards applied to their use are becoming stricter and more important.

Why AI Content Ethics Matters in 2026

The rapid growth of AI-generated content has led to:

- Increased misinformation risks

- Search engine scrutiny

- Audience trust issues

- Regulatory attention

Search engines increasingly prioritize helpful, trustworthy, human-reviewed content, so ethical AI use can directly affect how well your content ranks.

1. Transparency and Disclosure

One of the biggest ethical questions is whether you should disclose AI-generated content.

Best Practice:

Always inform readers when AI is used, especially in:

- News articles

- Product reviews

- Educational content

Example:

“This article was created with AI assistance and reviewed by a human editor.”

2. Avoiding AI-Generated Misinformation

AI tools can generate incorrect or misleading information.

Risks:

- False facts

- Fabricated sources

- Outdated data

Solution:

- Fact-check every claim

- Use trusted sources

- Add human verification

Accuracy is critical for SEO and credibility.

3. Bias in AI Content

AI systems can unintentionally reflect biases from training data.

Examples:

- Gender bias

- Cultural stereotypes

- Political skew

Ethical Tip:

Always review and edit AI outputs to ensure fairness and inclusivity.

4. Originality and Plagiarism

AI-generated content must still be original.

Risks:

- Duplicate content

- SEO penalties

- Copyright issues

Best Practices:

- Use plagiarism checkers

- Rewrite and personalize content

- Add unique insights
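As a rough illustration of the duplicate-content check, here is a minimal Python sketch that compares a draft against texts you already have on hand, using the standard library's `difflib`. The function names, the sample texts, and the 0.8 threshold are assumptions made for this example. It is not a substitute for a dedicated plagiarism checker, which compares against far larger collections of published content.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio, ignoring case and extra whitespace."""
    norm_a = " ".join(a.lower().split())
    norm_b = " ".join(b.lower().split())
    # autojunk=False keeps the comparison consistent on longer texts.
    return SequenceMatcher(None, norm_a, norm_b, autojunk=False).ratio()


def flag_near_duplicates(
    draft: str,
    references: dict[str, str],
    threshold: float = 0.8,  # assumed cut-off; tune it for your own content
) -> list[tuple[str, float]]:
    """List the reference texts whose similarity to the draft meets the threshold."""
    hits = []
    for name, text in references.items():
        score = similarity(draft, text)
        if score >= threshold:
            hits.append((name, round(score, 2)))
    # Highest similarity first, so the closest match is reviewed first.
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical sample texts, used only to show the call.
    references = {
        "old_post": "AI should assist humans, not replace them.",
        "other_post": "Bananas are rich in potassium.",
    }
    draft = "ai should assist   humans, not replace them."
    print(flag_near_duplicates(draft, references))
```

Anything this flags still needs a human read: a high score means the wording is close, not that the content is plagiarized.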

5. Copyright and Ownership in AI Content

Ownership of AI-generated content is still a legal gray area in 2026.

Key Points:

- Fully AI-generated content may not be copyright-protected

- Human input strengthens ownership claims

Recommendation:

Always edit AI content and add human creativity.

6. Human Oversight Is Essential

AI should assist humans, not replace them.

Why It Matters:

- Ensures quality

- Maintains brand voice

- Prevents ethical issues

Think of AI as a co-pilot, not the driver.

7. Data Privacy and Security

Using AI tools may involve sharing sensitive data.

Risks:

- Data leaks

- Misuse of information

Best Practices:

- Avoid entering confidential data

- Use trusted AI platforms

- Review privacy policies
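One way to act on the first practice is to strip obvious personal details from text before pasting it into an AI tool. The Python sketch below is only an assumption-laden example: the `redact` function and its two patterns are hypothetical, cover just email addresses and phone-like numbers, and will miss names, addresses, account numbers, and other confidential material.

```python
import re

# Simplified patterns chosen for this example; real data needs broader rules.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


if __name__ == "__main__":
    prompt = "Contact jane@example.com or +1 (555) 123-4567 today."
    print(redact(prompt))  # Contact [EMAIL] or [PHONE] today.
```

Treat this as a safety net, not a guarantee. A human should still review what is being shared before it leaves your organization.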

8. Over-Reliance on AI

Too much automation can reduce creativity and originality.

Warning Signs:

- Generic content

- Lack of unique voice

- Repetitive ideas

Solution:

Blend AI efficiency with human creativity.

Ethical Guidelines for AI Content Creation

Follow these principles to stay compliant and competitive:

- Be transparent about AI use

- Fact-check all content

- Ensure originality

- Monitor bias

- Protect user data

- Maintain human oversight

The Future of AI Content Ethics

In 2026 and beyond, expect:

- AI content regulations

- Mandatory disclosures

- AI watermarking systems

- Improved detection tools

Ethical AI use will become a standard requirement, not a competitive advantage.

Conclusion

AI is transforming content creation, but ethical responsibility must guide its use. By focusing on transparency, accuracy, and human oversight, you can create content that is not only efficient but also trustworthy and future-proof.

This article was created with AI assistance and reviewed by a human editor.

4 Comments

This is an incredibly timely breakdown for 2026. As we move into an era of stricter AI regulations and the EU AI Act, the focus on “accountability” over just “efficiency” is spot on.

In my experience, the biggest risk for creators right now isn’t the AI itself, but the “set it and forget it” mentality. In a world flooded with synthetic content, maintaining a “Human-in-the-loop” model is the only way to protect brand authority and ensure ethical integrity. I’ve found that using AI for the heavy lifting of research—while keeping 100% control over the final voice and verification—is where the real value lies.

Quick question: With the new disclosure guidelines coming into play this year, do you think we will reach a point where “Human-Verified” labels become as common and essential as SSL certificates for building reader trust?

That’s a really sharp way of framing it, and you’re right to connect this directly to where regulation is heading.

Short answer: yes, “Human-Verified” (or something very close to it) is likely to become common, but not in the exact way SSL certificates did. It’ll emerge more as a trust signal layer on top of mandatory disclosure, not a universal technical standard, at least not immediately.

I really enjoyed this perspective. AI isn’t just about speed, it’s about responsibility too. I think a lot of people overlook things like accuracy, bias, and transparency when creating content. When used intentionally, AI can support creativity, but it shouldn’t replace the human voice behind it.

Do you think most creators are thinking about ethics yet, or are they still focused mainly on output?

That’s a sharp observation, and honestly, most creators are still prioritizing output over ethics right now.

We’re in a phase where AI feels like a competitive advantage, so the focus is often on speed, volume, and staying relevant. That pressure tends to push questions like accuracy, bias, and transparency into the background. Not because creators don’t care, but because the incentives (algorithms, monetization, visibility) reward more content, not necessarily better or more responsible content.

That said, there’s a shift starting.

More experienced creators, and especially those building long-term brands, are beginning to realize that trust is an asset. If audiences start questioning whether content is misleading, generic, or overly automated, it erodes credibility fast. And once that trust is gone, it’s hard to rebuild.

So right now it’s a bit of a split:

- Early-stage or growth-focused creators: mostly focused on output and efficiency

- Established or brand-focused creators: increasingly thinking about ethics, transparency, and originality

Long-term, ethics will likely become a differentiator. The creators who are open about how they use AI, fact-check their work, and keep a clear human perspective in their content will stand out more as the space gets saturated.

In a way, we’re moving from “AI as a shortcut” to “AI as a tool you’re accountable for.”

I’m curious, when you think about ethical use, which concern stands out most to you: accuracy, bias, or transparency?